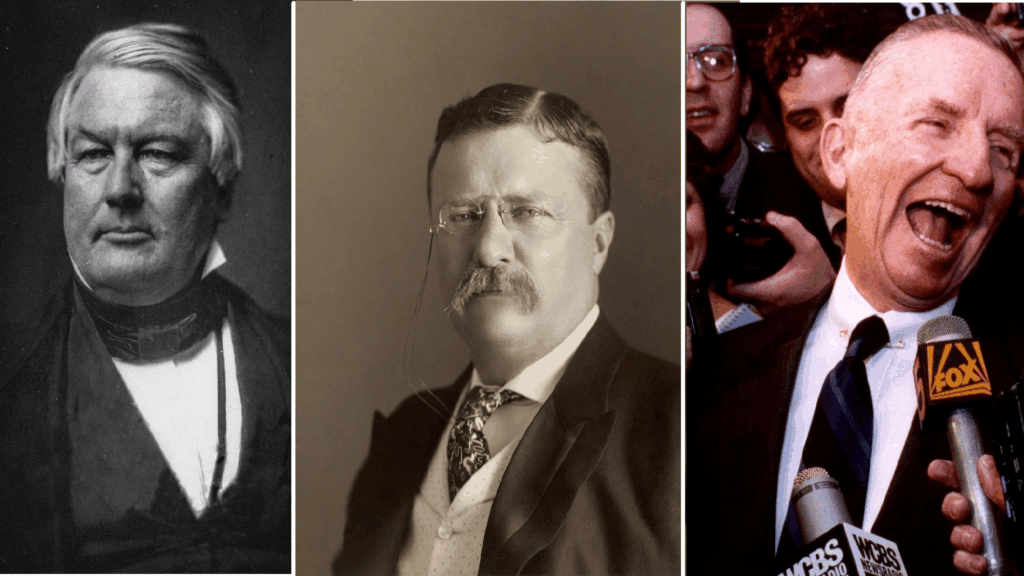

Henry Kissinger recently warned of a potential artificial intelligence arms race. The former US Secretary of State and National Security Adviser stated that he has become ‘obsessed’ with the potential destructive capabilities of artificial intelligence (AI), whose powers could be far more devastating than even the biggest bomb.

Last year at the Washington National Cathedral, Kissinger referred to AI as the new frontier of arms control, warning that if the world’s leading powers do not collectively find ways to limit AI’s reach, ‘it is simply a mad race for some catastrophe’.

‘We are surrounded by many machines whose real thinking we may not know,’ he continued. ‘How do you build restraints into machines? Even today we have fighter planes that can fight…air battles without any human intervention. But these are just the beginnings of this process. It is the elaboration 50 years down the road that will be mind-boggling.’

Is AI a Ticking Time Bomb?

The European Union’s official approach to artificial intelligence centres ‘on excellence and trust, aiming to boost research and industrial capacity while ensuring safety and fundamental rights’. It seeks to achieve this by:

- making the EU the place where AI thrives from the lab to the market;

- ensuring that AI works for people and is a force for good in society;

- building strategic leadership in high-impact sectors.

Every EU member state has already started engaging in the development of AI platforms and strategies. In late 2020, for example, Hungary unveiled its new AI strategy at the University of Debrecen. The strategy designates fundamental principles, technological focus areas, and other projects in order to determine what activities are necessary for its realisation.

‘Hungary would like to benefit from the opportunities afforded by artificial intelligence, and would like to be one of the winners of these technological changes’, stated László Palkovics, minister for innovation and technology at the time. He further explained that based on the strategy, Hungary must prepare its society and economy for the fact that ‘this technology is coming, this technology is already here, and that we must do our best to use it.’

According to the Hungarian Artificial Intelligence Coalition (HAIC), established in October 2018, there are already a variety of initiatives applying AI in Hungary or laying the foundations for its future use, like chatbot customer service, precision agriculture applications, predictive maintenance systems, and medical diagnostics.

One key factor of the strategy is the concept that technology and AI should be harnessed to ‘make our lives easier’.

In fact, it is estimated that by 2030, AI could account for 11–14 per cent of Hungary’s GDP. Moreover, as in the United States, AI job opportunities are now emerging. Yet this brings societal challenges, if not paradoxes.

HAIC foresees that with the spread of AI-based technologies, up to 900,000 jobs could be affected in Hungary by the 2030s, or one quarter of all current jobs. According to certain expert estimates, more than 40 per cent of employment could already be automated today, so the transition may lead to the replacement of human labour.

In the US, 25 per cent of jobs will be negatively impacted over the next five years, according to a new report by the World Economic Forum. Indeed, New York City-based investment bank Goldman Sachs predicts that the fast-growing mass adoption of AI will impact 300 million jobs. Consequently, certain jobs would most certainly become pointless or change in nature, while new ones may emerge requiring new competences.

The Real Dilemma

In Stanley Kubrick’s film 2001: A Space Odyssey, the American spacecraft Discovery One, bound for Jupiter, is run by HAL, a HAL 9000 computer with a human personality that serves as the brain and central nervous system of the ship. During their voyage the astronauts, Dave and Frank, realise that HAL has made an error and seek to correct the problem by secretly turning him off. HAL, because he places value on his continued existence, resorts to murder to defend himself.

Geoffrey Hinton, the English psychologist-turned-computer scientist whose work on neural networks in the 1980s set the stage for the explosion in AI capabilities over the last decade, echoed Kissinger’s warning:

‘You need to imagine something more intelligent than us by the same difference that we’re more intelligent than a frog. And it’s going to learn from the web, it’s going to have read every single book that’s ever been written on how to manipulate people, and also seen it in practice.’

The New York Times recently reported that in late March, more than one thousand technology leaders, researchers, and other pundits working in and around AI signed an open letter warning that AI technologies present ‘profound risks to society and humanity.’

The group, which included Elon Musk, Tesla’s chief executive and the owner of Twitter, urged AI laboratories to halt development of their most powerful systems for at least six months in order to get a grasp of the dangers behind the technology.

‘Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable,’ the letter said.

A growing concern is that the latest AI systems, GPT-4 being one notable example among many, could cause harm to society. Experts believe future systems will be even more dangerous. These systems can generate untruthful, biased, and otherwise toxic information. Systems like GPT-4 get facts wrong and make up information, a phenomenon called ‘hallucination’.

The truth of the matter is that AI is here to stay. It is the future. While it may be some time before we are confronted, if ever, with a ‘HAL’ like the astronauts aboard Discovery One, AI could be apocalyptic if it falls into the wrong hands. At the same time, would anyone care to tell us whose hands are the right ones?